Databricks Certified Data Engineer Professional Exam

Last Update Jun 10, 2026

Total Questions : 202

To help you prepare for the Databricks Certified Data Engineer Professional exam (Databricks-Certified-Professional-Data-Engineer), we offer free practice questions. Sign up and provide your details to get access to the entire pool of test questions. You can also find related resources online, such as video tutorials, blogs, and study guides, to help you understand the topics covered on the exam, and you can practice with realistic exam simulations that give feedback on your progress. Finally, you can share your progress with friends and family for encouragement and support.

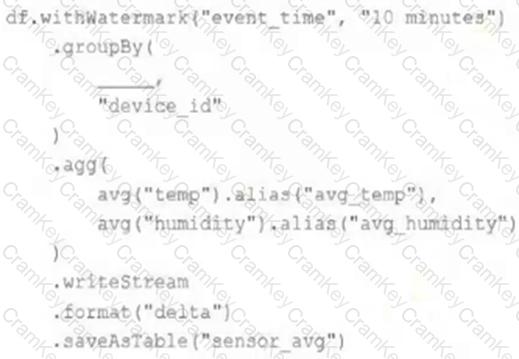

A junior data engineer has been asked to develop a streaming data pipeline with a grouped aggregation using DataFrame df. The pipeline needs to calculate the average humidity and average temperature for each non-overlapping five-minute interval. Events are recorded once per minute per device.

Streaming DataFrame df has the following schema:

"device_id INT, event_time TIMESTAMP, temp FLOAT, humidity FLOAT"

Code block:

Choose the response that correctly fills in the blank within the code block to complete this task.
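The question's own code block is not reproduced above, so the following is only an illustrative sketch of what "non-overlapping five-minute interval" means, written in plain Python rather than Spark. The function names (`window_start`, `average_by_window`) and the sample readings are hypothetical. It assumes windows are aligned to the Unix epoch, which is also how Spark's `window` function aligns tumbling windows by default.

```python
from collections import defaultdict
from datetime import datetime, timedelta

WINDOW = timedelta(minutes=5)  # tumbling (non-overlapping) window width
EPOCH = datetime(1970, 1, 1)   # assumed alignment origin


def window_start(event_time, width=WINDOW):
    """Floor event_time to the start of the tumbling window that contains it."""
    return event_time - ((event_time - EPOCH) % width)


def average_by_window(events):
    """Return {window_start: (avg_temp, avg_humidity)} over all devices."""
    sums = defaultdict(lambda: [0.0, 0.0, 0])  # temp sum, humidity sum, count
    for _device_id, event_time, temp, humidity in events:
        acc = sums[window_start(event_time)]
        acc[0] += temp
        acc[1] += humidity
        acc[2] += 1
    return {start: (t / n, h / n) for start, (t, h, n) in sorted(sums.items())}


# Hypothetical sample: one device reporting once per minute.
events = [
    (1, datetime(2024, 1, 1, 12, 0), 20.0, 50.0),
    (1, datetime(2024, 1, 1, 12, 1), 21.0, 50.0),
    (1, datetime(2024, 1, 1, 12, 2), 22.0, 50.0),
    (1, datetime(2024, 1, 1, 12, 3), 23.0, 50.0),
    (1, datetime(2024, 1, 1, 12, 4), 24.0, 50.0),
    (1, datetime(2024, 1, 1, 12, 5), 30.0, 60.0),
]

for start, (avg_temp, avg_humidity) in average_by_window(events).items():
    print(start, avg_temp, avg_humidity)

# In PySpark Structured Streaming, the same grouping is commonly written as:
#   df.groupBy(window("event_time", "5 minutes")).agg(avg("temp"), avg("humidity"))
# with window and avg imported from pyspark.sql.functions.
```

Each event belongs to exactly one window because the window width equals the slide interval. A sliding window, by contrast, would place one event in several overlapping windows.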

A data engineering team is migrating off its legacy Hadoop platform. As part of the process, they are evaluating storage formats for performance comparison. The legacy platform uses ORC and RCFile formats. After converting a subset of data to Delta Lake, they noticed significantly better query performance. Upon investigation, they discovered that queries reading from Delta tables leveraged a Shuffle Hash Join, whereas queries on legacy formats used Sort Merge Joins. The queries reading Delta Lake data also scanned less data.

Which reason could be attributed to the difference in query performance?

Where in the Spark UI can one diagnose a performance problem induced by not leveraging predicate push-down?

A production cluster has 3 executor nodes and uses the same virtual machine type for the driver and executor.

When evaluating the Ganglia Metrics for this cluster, which indicator would signal a bottleneck caused by code executing on the driver?