| | | | |
|---|---|---|---|
| Exam Name: | Databricks Certified Data Engineer Associate Exam | | |
| Exam Code: | Databricks-Certified-Data-Engineer-Associate Dumps | | |
| Vendor: | Databricks | Certification: | Databricks Certification |
| Questions: | 176 Q&A's | Shared By: | leyla |

Which of the following commands will return the number of null values in the member_id column?

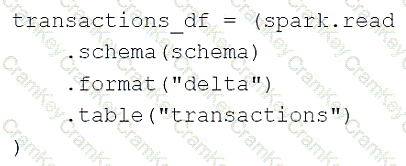
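
As a rough illustration of what this question is probing, the sketch below uses Python's built-in sqlite3 module as a stand-in for a Spark SQL engine. The table name members and its rows are made up for the example. It relies on standard SQL behavior: COUNT(column) skips nulls, so nulls have to be counted through a predicate. In Spark SQL the same idea can be written as count_if(member_id IS NULL), which SQLite does not provide.

```python
import sqlite3


def count_null_member_ids(conn):
    """Return the number of rows whose member_id is NULL."""
    # Filtering on IS NULL and counting rows is portable SQL.
    # COUNT(member_id) would not work here because it ignores NULLs.
    row = conn.execute(
        "SELECT COUNT(*) FROM members WHERE member_id IS NULL"
    ).fetchone()
    return row[0]


# Hypothetical in-memory table with two NULL member_id values.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE members (member_id INTEGER, name TEXT)")
conn.executemany(
    "INSERT INTO members VALUES (?, ?)",
    [(1, "a"), (None, "b"), (3, "c"), (None, "d")],
)

null_count = count_null_member_ids(conn)
# For contrast: COUNT(member_id) counts only the non-NULL values.
non_null_count = conn.execute(
    "SELECT COUNT(member_id) FROM members"
).fetchone()[0]
print(null_count, non_null_count)
```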

A data engineer is using the following code block as part of a batch ingestion pipeline to read from a composable table:

Which of the following changes needs to be made so this code block will work when the transactions table is a stream source?
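
The batch versus stream distinction can be sketched as follows. The code block the question refers to is not reproduced here, so this is a sketch under assumptions rather than the question's own code: the helper name read_transactions and its streaming flag are hypothetical. In PySpark, spark.read returns a batch DataFrameReader and spark.readStream returns a DataStreamReader, and both expose table().

```python
def read_transactions(spark, streaming=False):
    """Read the transactions table as a batch or streaming DataFrame.

    `spark` is assumed to be an existing SparkSession supplied by the
    caller (for example, the one predefined in a Databricks notebook).
    """
    # spark.read gives a one-off batch read;
    # spark.readStream gives an incremental streaming read.
    reader = spark.readStream if streaming else spark.read
    return reader.table("transactions")
```

Calling read_transactions(spark, streaming=True) yields a streaming DataFrame, which then has to be written with writeStream rather than write.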

A data analyst has created a Delta table sales that is used by the entire data analysis team. They want help from the data engineering team to implement a series of tests to ensure the data is clean. However, the data engineering team uses Python for its tests rather than SQL.

Which of the following commands could the data engineering team use to access sales in PySpark?
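
One way this might look in practice, as a minimal sketch: spark.table() returns a registered table as a PySpark DataFrame, after which the tests can be ordinary Python. The function name and the order_id column are hypothetical, and spark is assumed to be an existing SparkSession.

```python
def count_rows_missing_id(spark, id_column="order_id"):
    """Count rows in the sales table whose id column is null.

    `id_column` is a hypothetical column name used only for illustration.
    """
    # spark.table() loads a table by name as a DataFrame.
    sales_df = spark.table("sales")
    # A SQL expression string keeps this sketch free of extra imports.
    return sales_df.filter(f"{id_column} IS NULL").count()
```

A test could then assert that count_rows_missing_id(spark) == 0 to check that no row lacks an identifier.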

A Delta Live Tables pipeline includes two datasets defined using STREAMING LIVE TABLE. Three datasets are defined against Delta Lake table sources using LIVE TABLE.

The pipeline is configured to run in Production mode using the Continuous Pipeline Mode.

What is the expected outcome after clicking Start to update the pipeline, assuming previously unprocessed data exists and all definitions are valid?